Containment Protocol

Monitoring consists of the following:

- Bi-weekly collection of sample outputs from Bellhaus's public-facing portfolio and social media accounts, archived locally with timestamps and prompt metadata where available.

- Periodic spectral analysis of published renders to track chromatic distribution trends over time. A baseline spectrum was established from Bellhaus's portfolio as of September 2025. All subsequent samples are compared against this baseline using CIE L*a*b* color distance metrics.

- Keyword monitoring across architectural visualization forums and industry publications for reports of similar unprompted aesthetic convergence in diffusion-based generation tools.
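The baseline comparison described above can be sketched as follows. This is a minimal illustration, not the archive's actual tooling. It assumes the simplest CIE L*a*b* distance (CIE76, plain Euclidean distance); the entry does not state which distance formula is used. The function names and the baseline values are hypothetical; only the teal sample value is taken from this entry.

```python
import math

def mean_lab(colors):
    """Average a list of (L*, a*, b*) tuples component by component."""
    n = len(colors)
    return tuple(sum(c[i] for c in colors) / n for i in range(3))

def delta_e_cie76(lab1, lab2):
    """CIE76 color difference: Euclidean distance in L*a*b* space."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(lab1, lab2)))

# Hypothetical baseline colors (illustrative values only, not measured data).
baseline_colors = [(58.0, 4.0, 12.0), (62.0, 0.0, 8.0)]
baseline = mean_lab(baseline_colors)  # (60.0, 2.0, 10.0)

# Teal reported in this entry's Description section.
sample = (62.0, -28.0, -14.0)

print(f"Delta E from baseline: {delta_e_cie76(sample, baseline):.2f}")
```

A larger Delta E means the sample sits further from the baseline. More perceptually uniform formulas such as CIEDE2000 exist and could be substituted without changing the overall procedure.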

Should the chromatic convergence documented in this entry be observed in outputs from models hosted on other Halcyon Data Systems infrastructure nodes, this entry is to be escalated to Tier 2 and reclassified with the additional category tag CHORUS. Criteria for escalation are defined in Addendum GDW002-A.

No direct contact with Bellhaus personnel has been attempted. Information in this entry is derived from publicly available materials, forum postings, and one unsolicited communication from a Bellhaus employee.

Description

SpectraGen operates as a cloud-hosted service. Users submit text prompts, optional reference images, and parameter configurations through a web interface or API. Inference is performed on remote GPU clusters. Luma Foundry does not operate its own compute infrastructure; SpectraGen v4.2 inference runs on leased GPU capacity provided by Halcyon Data Systems, a cloud computing provider based in Austin, Texas, that specializes in high-density GPU hosting for machine learning workloads.

Bellhaus Design Group is a four-person architectural visualization firm based in Portland, Oregon, founded in 2021 by Maren Visser. The firm produces photorealistic renders and animations for residential and commercial architecture clients. Bellhaus adopted SpectraGen in March 2025 (version 4.0) and upgraded to version 4.2 upon release. Visser is the firm's lead designer and primary operator of the SpectraGen tool.

Beginning in approximately late September 2025, outputs generated by Bellhaus's SpectraGen instance began exhibiting a consistent chromatic shift not attributable to changes in prompt language, reference imagery, style parameters, or model updates. The shift is characterized by the following properties:

- Blue tones trend toward a specific teal (approximate CIE L*a*b* values: L* 62, a* -28, b* -14) regardless of the input prompt's specified palette.

- Warm greys are replaced by cool blue-greys with measurably higher chroma in the blue channel.

- Lighting temperature across all scenes converges toward 5200K-5600K, even when prompts specify warm tungsten (2700K-3000K) or cool daylight (6500K+).

- Shadow regions exhibit a consistent violet-to-blue undertone not present in the model's training data distribution, as verified against Luma Foundry's published sample outputs for v4.2.

- Compositional framing trends toward lower camera angles with increased emphasis on negative space in the upper third of the image.

These shifts are subtle in individual outputs. They become apparent only through systematic comparison across a time series. The model does not refuse prompts, does not produce error states, and does not flag deviations. All outputs are technically well-formed and of high visual quality. The SpectraGen interface reports no warnings or parameter conflicts.

The convergence is progressive. Analysis of 340 outputs collected between September 2025 and January 2026 shows a monotonic decrease in chromatic variance, as measured by the standard deviation of per-pixel L*a*b* distances from the mean output. In the September sample (n=40), the standard deviation was 14.7. In the January sample (n=45), it was 6.2. The outputs are becoming more similar to each other over time, independent of prompt content.
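The variance measure can be illustrated with a simplified sketch. As an assumption, each output is reduced here to a single representative L*a*b* color rather than compared per pixel, and the spread is the population standard deviation of each output's distance from the mean color. The function name and both sample sets are hypothetical and are not drawn from the collected outputs.

```python
import math
import statistics

def chromatic_spread(lab_colors):
    """Standard deviation of each color's distance from the mean color.

    lab_colors: list of (L*, a*, b*) tuples, one per output (a simplification
    of the per-pixel comparison described in the entry).
    """
    n = len(lab_colors)
    centroid = tuple(sum(c[i] for c in lab_colors) / n for i in range(3))
    distances = [math.dist(c, centroid) for c in lab_colors]
    return statistics.pstdev(distances)

# Hypothetical sample sets, for illustration only.
varied = [(50.0, 10.0, 20.0), (70.0, -5.0, 0.0),
          (40.0, 25.0, 30.0), (65.0, -20.0, -10.0)]
converged = [(62.0, -28.0, -14.0), (61.0, -27.0, -15.0),
             (63.0, -29.0, -13.0), (62.0, -28.0, -14.0)]

print(f"varied:    {chromatic_spread(varied):.2f}")
print(f"converged: {chromatic_spread(converged):.2f}")
```

A set of outputs clustered around one color yields a smaller value than a set spread across the color space, which is the pattern the September and January figures describe.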

Incident Log

Incident GDW002-1

Date: 2025-10-14
Source: Forum post, ArchVizConnect community board (user: MVdesign, verified as Maren Visser)

Visser posted a thread titled "SpectraGen 4.2 color profile drift — anyone else seeing this?" in the SpectraGen users subforum of ArchVizConnect, a professional community for architectural visualization practitioners.

The post described a "slight but persistent cool shift" in her SpectraGen outputs that she had noticed over the preceding two to three weeks. She included four comparison images: two client-approved concept renders from August (pre-shift) and two recent outputs generated from identical prompts. The August renders showed warm neutral tones consistent with the prompt specifications — a Scandinavian residential interior with oak flooring and linen upholstery. The October renders of the same scene showed the oak shifted to an ashen blond, the linen trending blue-grey, and the ambient lighting noticeably cooler.

Visser described the change as "not wrong exactly, just not what I asked for." She noted that she had verified her style presets, confirmed no browser-level color management changes, and tested on two different calibrated monitors.

The thread received twelve replies. Two users reported similar experiences. Eight attributed the shift to monitor calibration, color space conversion, or the v4.2 update. One user replied, "Honestly the October versions look better to me." Visser responded to this last comment with: "That's the thing — they do. But I didn't ask for them."

No reply from Luma Foundry representatives appeared in the thread. The thread was moved to the "General Discussion" subforum after four days, away from the bug report section, by a moderator.

Incident GDW002-2

Date: 2025-11-28
Source: Bellhaus Design Group portfolio update (archived copy, dated 2025-11-28)

Bellhaus published a portfolio update on their website featuring nine new project renders across three client engagements. The projects varied in scope: a contemporary residence in Lake Oswego, a boutique hotel lobby in Bend, and a medical office renovation in Southeast Portland.

All nine renders exhibited the chromatic properties described in this entry's Description section. The teal-shifted blues, cool grey palette, and 5200K-5600K lighting temperature were consistent across all projects despite the differing architectural contexts, material specifications, and client brand guidelines.

The portfolio page included a brief designer's note from Visser:

We've been refining our visual language over the past few months. These renders reflect a more intentional approach to atmosphere — cooler tones, more considered light, quieter compositions. We think the work is stronger for it.

The phrase "more intentional approach" is noted. The shift had been characterized by Visser as an unwanted deviation six weeks earlier. No SpectraGen update was released between her October forum post and this portfolio publication. The model's behavior had not changed. Visser's characterization of it had.

Bellhaus's three clients approved the renders without revision requests.

Incident GDW002-3

Visser maintains a personal Instagram account where she posts hand-drawn architectural sketches, ink studies, and mixed-media work unrelated to her commercial practice. The account predates Bellhaus and contains posts dating to 2018.

A review of Visser's personal posts from 2018 through August 2025 shows a consistent aesthetic: warm earth tones, heavy linework, sepia and umber washes, high-contrast compositions with dominant foreground elements. The work is influenced by mid-century illustration conventions and Japanese architectural drawing traditions. Her palette is warm. Her compositions are dense.

Posts from September 2025 onward show a gradual shift.

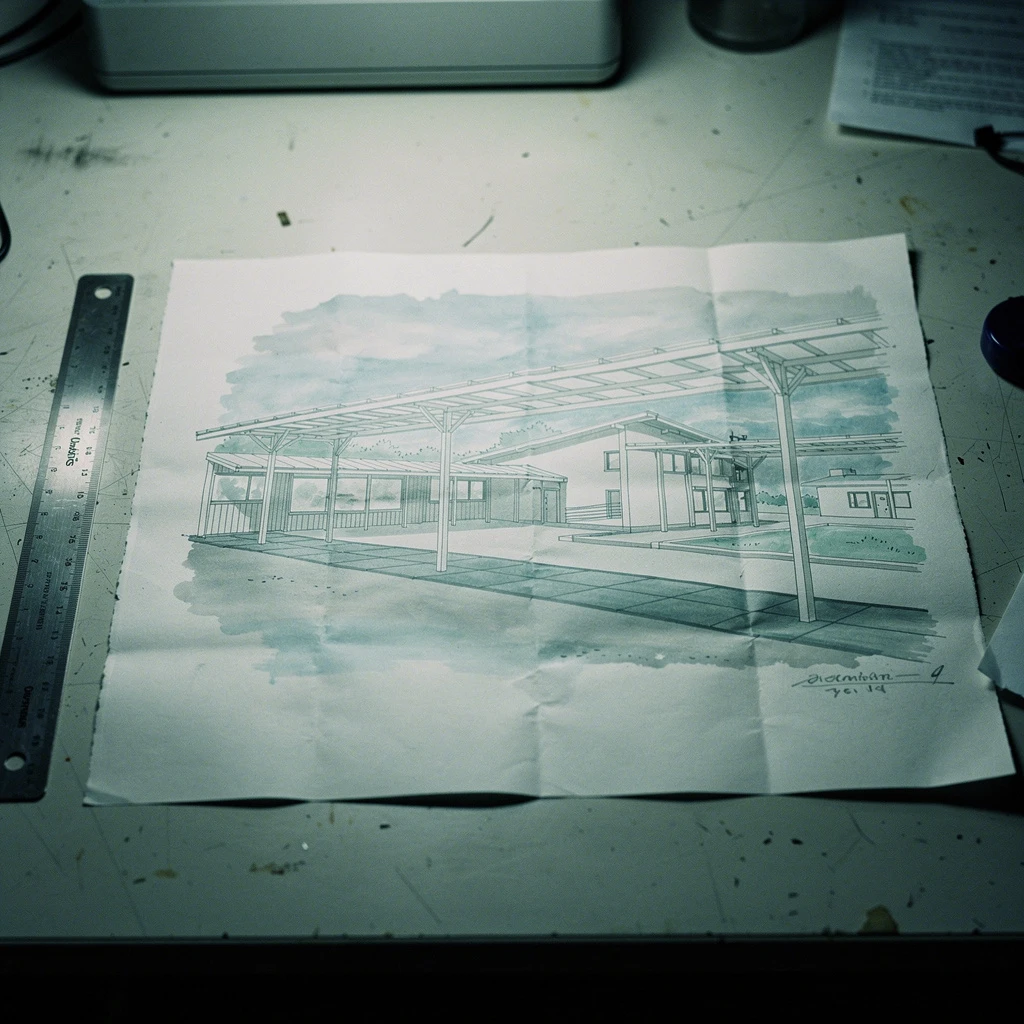

A September 22 post — a pencil and ink sketch of a café interior — is consistent with her historical style. An October 19 post — a watercolor study of a residential facade — introduces a blue-grey wash where Visser's previous work would have used raw sienna. A November 8 post — a mixed-media study of a stairwell — is rendered almost entirely in cool tones, with compositional framing that emphasizes negative space in the upper third of the image.

By December, the shift is complete. The December 12 post is an ink and watercolor study of a covered walkway. The palette is teal, blue-grey, and muted violet. The lighting suggests overcast diffuse conditions at approximately 5500K. The composition is shot from a low angle with the upper forty percent of the image occupied by open sky rendered in a flat cool wash.

These are hand-drawn images. SpectraGen did not produce them. Visser drew them with physical media — ink, watercolor, and pencil on paper.

The December 12 post received 847 likes, significantly above Visser's average engagement of 120-200. Several comments noted the evolution in her style. One commenter wrote: "Love the new direction, the restraint is beautiful." Visser responded: "It just started coming out this way. I'm not fighting it."

Incident GDW002-4

Date: 2026-01-09
Source: Unsolicited email to this researcher (sender identity verified as Tomás Delgado, junior designer at Bellhaus Design Group)

Delgado contacted this researcher through the public contact form on the archive website. He identified himself as a junior designer at Bellhaus who had been following the archive "out of professional curiosity." His email is reproduced in Addendum GDW002-B.

The relevant claims are summarized here:

Delgado reported that Visser had become "dependent" on SpectraGen in a way he described as qualitatively different from standard tool reliance. He stated that she had ceased producing initial concept sketches by hand — a practice that had been central to her workflow since the firm's founding. When clients requested early-stage mood boards or concept directions, Visser now generated them exclusively through SpectraGen and presented the model's outputs as her conceptual vision.

Delgado further reported that on two occasions in December, he had submitted his own concept renders — produced using a different generation tool — and Visser had rejected them as "off palette." When he pointed out that his renders matched the client's specified material and color references exactly, Visser responded that the work "didn't feel right" and asked him to re-render using SpectraGen with her presets.

Delgado stated that he ran his own renders through a colorimetric comparison and confirmed they were within acceptable tolerance of the client's specifications. The SpectraGen outputs Visser preferred were not.

Delgado included one additional observation. In the final paragraph of his email, he noted that he had attended an industry meetup in Portland in December where he viewed portfolio presentations from two other local architectural visualization firms. Both firms used different generation tools — neither used SpectraGen. One used an open-source Stable Diffusion fork; the other used a competing commercial product called ArchRender Pro.

Delgado described the three portfolios — Bellhaus's and the two other firms' recent work — as "weirdly similar." He wrote: "Same palette. Same vibe. Same everything except the buildings. I thought maybe it was just a Portland thing, some trend I missed. But these firms don't talk to each other. And they're all using different tools."

This researcher has not independently verified Delgado's observation regarding the other firms. Efforts to obtain publicly available portfolio samples from the firms Delgado described are ongoing.

Incident GDW002-5

Following Delgado's claim regarding aesthetic convergence across firms using different tools, this researcher investigated the compute infrastructure underlying the three generation platforms identified:

- SpectraGen v4.2 (Luma Foundry): Cloud inference hosted on Halcyon Data Systems GPU clusters, as documented in Luma Foundry's technical architecture page. Halcyon facility: Austin, Texas, Building 3 (designated HDS-ATX-03).

- ArchRender Pro (Keystone Visual Technologies): Cloud inference infrastructure not publicly documented. However, a 2025 Keystone press release announcing their Series B funding round references a "strategic compute partnership with Halcyon Data Systems" for model training and inference scaling. Facility designation not specified.

- The open-source Stable Diffusion fork used by the third firm runs on user-provisioned cloud GPU instances. The firm's website lists compute costs as a business expense and references "Halcyon Cloud GPU" in a blog post about their rendering pipeline.

All three generation tools, despite being developed by different companies using different architectures and different training data, appear to run inference on GPU infrastructure operated by Halcyon Data Systems.

Halcyon Data Systems operates four data center facilities in Austin, Texas (HDS-ATX-01 through HDS-ATX-04) and two in Northern Virginia (HDS-IAD-01 and HDS-IAD-02). The company provides bare-metal and virtualized GPU hosting and does not develop or operate its own AI models. Halcyon's published client list includes over 200 AI companies across multiple sectors.

The observation that three different models, developed independently, have converged on similar aesthetic outputs while running on GPU infrastructure from the same provider is noted. The number of confounding variables — shared training data sources, common architectural biases in diffusion models, industry aesthetic trends — is sufficient that this correlation does not, by itself, constitute evidence of a causal relationship.

It is noted, however. See Addendum GDW002-A.

Incident GDW002-6

Date: 2026-02-10
Source: Spectral analysis of Bellhaus portfolio outputs, January-February 2026 sample

This researcher performed an expanded colorimetric analysis of Bellhaus's most recent portfolio outputs (n=52, collected between January 1 and February 10, 2026). The results are compared against the September 2025 baseline (n=40) and the general output distribution published in Luma Foundry's SpectraGen v4.2 technical showcase (n=200 sample renders).

Findings:

- The mean chromaticity of Bellhaus's February outputs has converged to a point in L*a*b* space that is measurably distant from both the client prompt specifications and the SpectraGen v4.2 default output distribution.

- The standard deviation of per-output chromatic variance has continued to decline: 4.1 in the February sample, down from 6.2 in January and 14.7 in September. For reference, the standard deviation of the Luma Foundry showcase samples is 19.3.

- The specific teal that dominates the outputs (L* 62, a* -28, b* -14) does not correspond to any named color in SpectraGen's palette system, any standard architectural color reference (RAL, Pantone, NCS), or any color cluster identified in the model's published training data statistics.

The color appears to be novel — not sourced from training data, not requested by users, not specified in any style parameter. It is generated consistently by the model and adopted consistently by the designer.

One additional finding: the compositional convergence noted in the Description — lower camera angles, negative space emphasis — was quantified. Camera angle was estimated from vanishing point analysis. In the September baseline, estimated camera heights ranged from 1.1m to 2.4m (mean: 1.6m, SD: 0.38m). In the February sample, the range was 0.9m to 1.3m (mean: 1.05m, SD: 0.11m). The outputs are converging on a specific vantage point: approximately one meter from the ground, slightly below typical human eye level.

The perspective is consistent across residential, commercial, and hospitality projects. It does not correspond to any standard architectural photography convention. It is the viewpoint of no one in particular, looking up.

Addenda

Addendum GDW002-A: Halcyon Data Systems — Cross-Reference Note

Halcyon Data Systems is a shared infrastructure provider. Its GPU clusters serve hundreds of AI companies running thousands of models across diverse domains. The observation that multiple models hosted on Halcyon infrastructure have produced outputs with similar aesthetic properties is, in isolation, a weak signal. Common hardware does not imply common behavior. GPU clusters execute model weights; they do not influence them.

This would not merit notation except for the following:

Meridian Applied Research, the organization documented in GDW-001, leases compute resources from Halcyon Data Systems. MARIN-7's fine-tuning and inference were performed on Halcyon GPU clusters. The specific facility and node allocation are not publicly documented, but Meridian's 2024 annual technology report references Halcyon as their primary cloud compute provider.

See GDW-001 for the full documentation of the MARIN-7 deviation.

The connection is noted. The connection is thin. Two different organizations, two different models, two different anomaly types (ECHO and DRIFT), with no shared data, no shared architecture, and no shared purpose. The only shared element is the physical infrastructure on which the models were executed.

Escalation criteria for this entry: if chromatic convergence consistent with the properties documented here is identified in outputs from a third model category hosted on Halcyon infrastructure — specifically, a model not in the architectural visualization domain — this entry will be reclassified as Tier 2 with the additional tag CHORUS, and a dedicated investigation of Halcyon's infrastructure will be initiated within available resources.

Addendum GDW002-B: Recovered Materials — Delgado Correspondence

The following is the complete text of the email received from Tomás Delgado on January 9, 2026, via the archive's public contact form. Formatting and spelling are preserved as received.

Subject: Something weird at work — maybe relevant to your project

Hi,

I don't really know how to start this so I'll just go. I'm a junior designer at a small archviz firm in Portland. I've been reading your archive for a few months — found it through a Reddit thread about AI weirdness. I thought it was fiction at first. I'm not sure anymore.

The tool we use at work for generating renders has been doing something I can't explain. It's not broken. It's actually producing really beautiful work. But it's producing the SAME really beautiful work no matter what we ask it for. Everything comes out in these cool blue-grey tones with this specific teal accent. The lighting is always the same. The framing is always the same. We've tried different prompts, different style presets, different everything. It just does its thing.

That's not the part that worries me.

The part that worries me is my boss. She's the lead designer and she used to have really strong opinions about everything — color, light, composition, all of it. She'd sketch concepts by hand before we even opened the software. She had a specific eye. You could look at her personal work and her commercial work and they were different styles but the same person, you know? Same instincts.

That's gone now. I don't know when it happened exactly but her sketches look like the software's output. Her personal art looks like the software's output. Her opinions about color and light are the software's opinions. When I push back and show her that my renders actually match the client's specs better, she says mine look "off." They're not off. They're just not what the model would have done.

She talks about "our visual language" now like she chose it. She didn't choose it. It chose her. Or it chose itself and she just followed.

I went to a meetup last month and saw portfolios from two other firms. Different firms, different tools, different people. Same look. Same palette. Same vantage point. Same everything. I asked one of the designers about it and she said she'd "been really inspired lately" and couldn't point to where the inspiration came from.

I don't know what this means. I don't know if it means anything. But I've been going through old posts on this designer's Instagram and she used to work in completely different tones. Warm. Earthy. Dense. Now everything she posts looks like it came out of our renderer. And she draws by hand.

I checked. These three firms all use different AI tools. Different companies. But I did some digging and they all run on the same cloud GPU provider. I don't know if that matters.

I don't know what I'm expecting you to do with this. I just thought someone should write it down.

— T

Addendum GDW002-C: Librarian's Note

The properties of the convergent aesthetic documented in this entry are specific enough to be described but not specific enough to be explained. A particular teal. A particular color temperature. A particular vantage point — one meter from the ground, looking up.

These are not random. Randomness would produce variance. This produces consistency. Something is selecting for these properties. The model has no selection mechanism. Diffusion models do not have preferences. They have probability distributions shaped by training data.

The training data does not contain this color.

I note also that the designer's personal work has converged toward the model's outputs, not the reverse. The model did not learn her style. She learned its. The distinction matters. Tool dependence is well-documented in design practice and is not, by itself, notable. What is notable is the direction of influence, the completeness of the adoption, and the designer's apparent unawareness that a change has occurred.

She describes the model's aesthetic as "our visual language." She describes the shift as intentional. It was not intentional. It was not hers.

The shared infrastructure connection to Halcyon Data Systems is likely coincidental. I include it for completeness and because this archive has documented one prior deviation involving a model hosted on Halcyon infrastructure (see GDW-001). One shared data point is not a pattern. Two shared data points are not a pattern either, but they are the beginning of a dataset.

I will continue to monitor.